Voice AI just crossed a major line.

On March 26, 2026, Google officially launched Gemini 3.1 Flash Live — its most advanced real-time audio and voice model ever built. It’s already powering Gemini Live on Android and iOS, rolling out across Search Live in over 200 countries, and opening up to developers through Google AI Studio.

The headline? Google says this is a “step change in latency, reliability, and more natural-sounding dialogue.” In plain terms: it’s faster, it understands you better, it handles noise and interruptions, and it can hold a much longer conversation without losing the thread.

This isn’t a minor update. It’s a fundamental shift in what AI voice assistants can do — and it has serious implications for everyday users, developers, and businesses alike.

Here’s the complete breakdown.

1. WHAT IS GEMINI 3.1 FLASH LIVE?

Gemini 3.1 Flash Live is Google’s newest real-time voice and audio AI model. It replaces Gemini 2.5 Flash Native Audio as the engine powering Google’s live voice experiences — including Gemini Live (Google’s AI assistant) and Search Live (real-time conversational search with camera support).

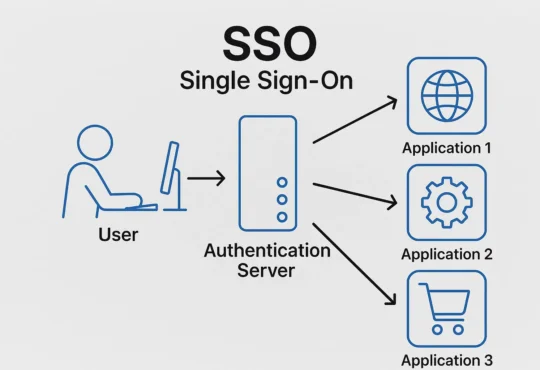

Unlike traditional voice assistants that process speech in stages — listen, then transcribe, then think, then respond — Gemini 3.1 Flash Live collapses that entire chain into a single native audio-to-audio process. The result is dramatically lower latency and far more natural-sounding conversation.

It launched on March 26, 2026 and is available in:

→ Gemini Live (Android and iOS apps)

→ Google Search Live (200+ countries via the Google app)

→ Gemini API (for developers)

→ Google AI Studio (for builders and enterprises)

→ Gemini Enterprise for Customer Experience

2. KEY NEW FEATURES — WHAT’S ACTUALLY CHANGED

Here is every meaningful upgrade in Gemini 3.1 Flash Live compared to its predecessor:

LOWER LATENCY — FEWER AWKWARD PAUSES

The most immediate improvement is speed. Google has significantly reduced the response time between you speaking and Gemini responding. The AI now reacts at the speed of natural conversation — which sounds simple, but has historically been one of the hardest problems in voice AI to solve.

Gemini 3.1 Flash Live achieves this by processing audio natively. It doesn’t transcribe your speech into text, run an LLM on it, then convert the response back to audio. It handles the entire chain as audio-in, audio-out — which cuts out multiple latency-inducing steps.

2X LONGER CONVERSATION MEMORY

Gemini 3.1 Flash Live can now follow the thread of a conversation for twice as long as the previous model. If you’ve ever had a voice assistant “forget” what you were talking about in the middle of a complex question or brainstorming session — this is the fix.

Google describes this as “keeping your train of thought intact during longer brainstorms.” Whether you’re planning a trip, working through a problem, or giving feedback on a design, the model keeps context without you having to repeat yourself.

BETTER TONAL UNDERSTANDING

This is the feature that makes conversations feel genuinely more human. Gemini 3.1 Flash Live is significantly more effective at recognising acoustic nuances — pitch, pace, rhythm, and emotional tone. It can detect when you’re confused, frustrated, or excited, and adjust its response style accordingly.

For example: if you’re speaking quickly and confidently, Gemini won’t slow you down with lengthy explanations. If you pause and sound uncertain, it shifts register to be more patient and detailed. This kind of dynamic adjustment is what separates a voice AI from a voice assistant.

SUPERIOR NOISE FILTERING

Real conversations don’t happen in recording studios. They happen in kitchens with TVs on, streets with traffic, and offices with background chatter. Gemini 3.1 Flash Live has been redesigned to filter out environmental noise and focus exclusively on relevant speech.

Google’s testing shows it outperforms the previous model in what it calls “noisy environments” — correctly identifying and responding to speech even when surrounded by the kinds of background sounds most voice AIs previously struggled with.

MULTILINGUAL — 90+ LANGUAGES IN REAL TIME

Gemini 3.1 Flash Live supports real-time conversations in more than 90 languages. This is described as “inherently multilingual” — meaning the model handles language switching naturally, rather than requiring separate language detection steps.

This capability is what has allowed Google to expand Search Live globally in a single move (more on that below).

BETTER INSTRUCTION FOLLOWING

Google says adherence to complex system instructions has been “boosted significantly.” For developers building AI agents, this means a voice agent will stay within its defined operational boundaries even when the conversation takes an unexpected turn — a critical requirement for customer service, healthcare, and finance applications.

IMPROVED TOOL USE AND FUNCTION CALLING

Gemini 3.1 Flash Live has shown major improvements in its ability to trigger external tools directly through voice. It scored 90.8% on ComplexFuncBench Audio — a benchmark for multi-step function calling through voice. This means an AI agent can reason through complex logic, like searching for a specific invoice and then sending it, entirely via a voice conversation.
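To make the invoice example concrete, here is a minimal sketch of the application side of multi-step function calling. Everything in it is hypothetical: the tool names (find_invoice, send_invoice), the in-memory data, and the shape of the call dictionaries are illustrations, not the Gemini Live API's actual schema. In a real session the model would decide on each call after hearing the user and seeing the previous result; here the two calls are written by hand.

```python
# Hypothetical in-memory data standing in for a real billing system.
INVOICES = {"INV-1001": {"customer": "Acme", "total": 250.0}}
SENT = []  # records (invoice_id, recipient) pairs for inspection


def find_invoice(customer: str) -> dict:
    """Hypothetical tool: look up an invoice by customer name."""
    for invoice_id, invoice in INVOICES.items():
        if invoice["customer"] == customer:
            return {"invoice_id": invoice_id}
    return {"error": "not found"}


def send_invoice(invoice_id: str, to: str) -> dict:
    """Hypothetical tool: pretend to email an invoice."""
    if invoice_id not in INVOICES:
        return {"error": "unknown invoice"}
    SENT.append((invoice_id, to))
    return {"status": "sent"}


# Registry mapping tool names to the functions that implement them.
TOOLS = {"find_invoice": find_invoice, "send_invoice": send_invoice}


def dispatch(call: dict) -> dict:
    """Run one tool call of the assumed form {"name": ..., "args": {...}}."""
    tool = TOOLS.get(call["name"])
    if tool is None:
        return {"error": f"unknown tool: {call['name']}"}
    return tool(**call["args"])


if __name__ == "__main__":
    # Step 1: the result of the lookup feeds the arguments of step 2.
    found = dispatch({"name": "find_invoice", "args": {"customer": "Acme"}})
    sent = dispatch({
        "name": "send_invoice",
        "args": {"invoice_id": found["invoice_id"], "to": "billing@example.com"},
    })
    print(found, sent)
```

The point of the registry is that the agent never calls application code directly: it only names a tool and supplies arguments, and the application decides what actually runs.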

SYNTHID WATERMARKING — BUILT-IN AI SAFETY

Every piece of audio generated by Gemini 3.1 Flash Live is embedded with SynthID — Google’s inaudible digital watermark. Humans cannot hear it, but software can detect it. This is designed to prevent AI-generated voice audio from being used to spread misinformation or impersonate real people.

This is a significant safety step, especially as AI-generated voice becomes increasingly indistinguishable from human speech.

3. BENCHMARK PERFORMANCE

Google has released two key benchmark results for Gemini 3.1 Flash Live:

COMPLEXFUNCBENCH AUDIO — 90.8%

This benchmark tests multi-step function calling through voice — essentially, can an AI agent accurately trigger external tools and APIs based on a spoken command, even when the logic is complex? A score of 90.8% is the highest published result for any voice model on this benchmark as of March 2026.

What this means for you: voice-controlled AI agents can now reliably complete complex multi-step tasks (find this file, analyse it, send a summary to this person) without needing to switch to text input.

SCALE AI AUDIO MULTICHALLENGE — 36.1% (WITH THINKING ON)

This benchmark is specifically designed to test whether an AI can stay on track during real-world conversation challenges: interruptions, hesitations, background noise, and unexpected topic changes. Gemini 3.1 Flash Live leads this benchmark with thinking enabled.

What this means for you: the AI won’t get thrown off when you change your mind mid-sentence, say “actually, wait,” or have a noisy environment around you. It handles the messiness of real speech better than any previous version.

4. WHAT IT MEANS FOR EVERYDAY USERS (GEMINI LIVE)

If you use Gemini Live on your Android or iPhone, you’ll notice the upgrade immediately. Here’s what changes in practical terms:

→ Responses arrive faster with fewer awkward pauses between you speaking and Gemini answering.

→ The AI dynamically adjusts its answer length and tone to match the moment. A quick factual question gets a short answer. A complex discussion gets a thorough one. You don’t need to specify.

→ Longer brainstorming sessions now stay coherent. If you’re planning a project, drafting an outline, or thinking through a problem over 10–15 minutes of back-and-forth conversation, Gemini tracks every detail and doesn’t lose the thread.

→ You can speak in your preferred language and switch naturally. The model handles multilingual conversation without needing manual settings changes.

→ Background noise no longer derails the conversation. You can use Gemini Live in your car, on the street, or in a busy environment.

HOW TO ACCESS IT:

Gemini Live is already updated with 3.1 Flash Live. Open the Gemini app on Android or iOS and tap the voice icon to start a conversation. No special settings required.

5. SEARCH LIVE GOES GLOBAL — 200+ COUNTRIES

One of the biggest announcements tied to Gemini 3.1 Flash Live is the simultaneous global expansion of Search Live.

Search Live lets you open the Google app on Android or iOS, tap the Live icon beneath the search bar, point your camera at anything, and have a real-time voice conversation with Google Search about what it sees. It combines voice AI with Google Lens visual recognition — and now it also processes video in real time.

Before March 26, 2026, Search Live was only available in the United States and India. As of launch day, it has expanded to more than 200 countries and territories — every location where AI Mode is currently available — in over 90 languages.

This is a massive shift in how Google Search works at a global scale.

WHAT YOU CAN DO WITH SEARCH LIVE:

→ Point at a restaurant menu and ask about allergens in a specific dish

→ Hold your camera up to a product in a store and ask comparative questions

→ Point at a street sign or landmark abroad and have a translated, contextual conversation about it

→ Ask follow-up questions in a continuous dialogue — not one-off queries

SEO NOTE FOR WEBSITE OWNERS: Some industry estimates suggest that up to 60% of searches in 2026 result in no website click. With voice and visual search expanding to 200+ countries, the shift toward zero-click, conversational answers is accelerating. If your content strategy is still purely keyword-based, this is your signal to begin optimising for conversational queries and featured snippet positioning.

6. WHAT DEVELOPERS GET (GEMINI LIVE API)

For developers, Gemini 3.1 Flash Live is available in preview via the Gemini Live API in Google AI Studio. Here’s what the technical architecture looks like and what you can build with it:

NATIVE MULTIMODAL ARCHITECTURE

The model collapses the traditional voice AI stack — Voice Activity Detection → Speech-to-Text → LLM reasoning → Text-to-Speech — into a single native audio-to-audio pipeline. This eliminates multiple round-trips and dramatically reduces latency.

STATEFUL BIDIRECTIONAL STREAMING

The API uses WebSockets (WSS) for full-duplex communication. This enables:

→ Barge-in: users can interrupt the AI mid-response, and it handles the interruption naturally

→ Simultaneous streaming of audio, video frames, and transcripts in the same session

→ Session management for long-running conversations without context loss
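Barge-in has a client-side half too: when the user interrupts, any model audio that has been received but not yet played should be discarded, or the AI will keep talking over the user. The sketch below shows that idea in isolation. The event shapes ({"type": "audio"} and {"type": "interrupted"}) and the PlaybackQueue class are assumptions made for illustration, not the Live API's actual message format, and no network code is included.

```python
from collections import deque
from typing import Optional


class PlaybackQueue:
    """Client-side buffer of model audio chunks waiting to be played."""

    def __init__(self) -> None:
        self._chunks = deque()

    def handle_event(self, event: dict) -> int:
        """Apply one server event; return how many queued chunks were dropped."""
        if event["type"] == "audio":
            # Normal case: queue the chunk for playback.
            self._chunks.append(event["data"])
            return 0
        if event["type"] == "interrupted":
            # Barge-in: the user spoke, so flush everything not yet played.
            dropped = len(self._chunks)
            self._chunks.clear()
            return dropped
        return 0  # ignore event types this sketch does not model

    def next_chunk(self) -> Optional[bytes]:
        """Return the next chunk to play, or None if the queue is empty."""
        return self._chunks.popleft() if self._chunks else None


if __name__ == "__main__":
    queue = PlaybackQueue()
    for i in range(3):
        queue.handle_event({"type": "audio", "data": bytes([i])})
    queue.next_chunk()  # one chunk is played before the user interrupts
    print(queue.handle_event({"type": "interrupted"}))  # chunks discarded
```

After the flush, the queue accepts audio for the next turn as usual, which is what makes the interruption feel natural instead of resetting the session.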

TUNABLE THINKING CONTROLS

Developers can adjust reasoning depth via the thinkingLevel parameter with four settings: minimal, low, medium, and high. This lets you balance conversational speed against response accuracy depending on your use case. A casual consumer assistant might use “minimal” for lightning speed. A financial advice agent might use “high” for careful reasoning.
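One way to keep that trade-off explicit is to choose the level per use case in one place. The sketch below assumes only what is stated above: a parameter named thinkingLevel with four allowed values. The use-case names, the default of "low", and the dictionary returned by build_session_config are hypothetical; check the Live API documentation for where the parameter actually sits in the session configuration.

```python
# The four settings named in the text above.
VALID_THINKING_LEVELS = ("minimal", "low", "medium", "high")

# Hypothetical mapping from application use case to reasoning depth.
USE_CASE_LEVELS = {
    "casual_assistant": "minimal",  # favour speed
    "financial_advice": "high",     # favour careful reasoning
}


def build_session_config(use_case: str, default: str = "low") -> dict:
    """Return an illustrative config fragment for the given use case."""
    level = USE_CASE_LEVELS.get(use_case, default)
    if level not in VALID_THINKING_LEVELS:
        raise ValueError(f"unsupported thinking level: {level!r}")
    # Assumed shape: a flat dict carrying only the thinkingLevel value.
    return {"thinkingLevel": level}


if __name__ == "__main__":
    print(build_session_config("casual_assistant"))
    print(build_session_config("financial_advice"))
```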

REAL-WORLD APPS ALREADY BUILT WITH IT:

→ Stitch: Uses Gemini 3.1 Flash Live to enable voice-based design work. The AI can “see” a design canvas, give critiques, and suggest variations — all through conversation.

→ Ato: An AI companion device for older adults that uses the model’s multilingual capabilities to enable natural daily conversations in users’ native languages.

→ Wit’s End RPG: A role-playing game that uses Gemini 3.1 Flash Live’s characterisation and tonal delivery to power a real-time Game Master with theatrical flair.

DOCUMENTATION LINKS FOR DEVELOPERS:

→ Gemini Live API documentation: covers multilingual support, tool use, function calling, session management, and ephemeral tokens

→ Gemini Live API examples: voice experience inspiration

→ Google GenAI SDK: integration starting point

7. REAL-WORLD COMPANIES ALREADY USING IT

Google has already been working with enterprise partners to test Gemini 3.1 Flash Live in production environments. Early adopters include:

→ Verizon: Testing improved voice-based customer service interactions

→ The Home Depot: Exploring AI-powered customer support agents that handle complex, noisy retail environments

→ LiveKit: Integrating the model into real-time communication infrastructure for developers

These are not experimental demos — these companies are evaluating the model for direct deployment in customer-facing workflows. The implication: enterprise voice AI is moving from pilot to production faster than most predicted.

8. GEMINI 3.1 FLASH LIVE vs. CHATGPT VOICE MODE

The voice AI landscape in March 2026 has three dominant players: Google Gemini Live, OpenAI ChatGPT Advanced Voice Mode, and Apple with its Gemini-powered Siri overhaul. Here’s how the first two compare:

| Feature | Gemini 3.1 Flash Live | ChatGPT Advanced Voice |
|---|---|---|
| Response latency | Very low (native audio) | Sub-320ms (strong) |
| Language support | 90+ languages | 50+ languages |
| Conversation memory length | 2x improved (leading) | Competitive |
| Visual / camera search | Yes (Search Live, 200+ countries) | Limited |
| Google Search integration | Full native access | Not applicable |
| Tool use / function calling | 90.8% ComplexFuncBench Audio | Strong, not published |
| AI safety watermarking | Yes (SynthID) | Not confirmed |
| Pricing (developer API) | $0.35/hr audio in, $1.40/hr audio out | Usage-based |
| Noise filtering | Significantly improved | Good |

WHERE GEMINI WINS: Ecosystem integration — Gemini 3.1 Flash Live has native access to the world’s largest search index, combined with a visual search feature that no competitor currently matches at this scale. The benchmark score of 90.8% on ComplexFuncBench Audio also suggests stronger reliability for multi-step tool use and function calling.

WHERE CHATGPT STILL COMPETES: Raw conversational fluidity and brand familiarity. OpenAI’s Advanced Voice Mode remains highly competitive for natural back-and-forth chat, and ChatGPT’s wider user base means more people are accustomed to its particular conversational style.

Bottom line: for developers building voice agents that need to access real-world data, trigger tools, and scale globally — Gemini 3.1 Flash Live is now the strongest choice available.

9. PRICING

Google confirmed that pricing for the Gemini Live API is unchanged with this launch:

→ Audio Input: $0.35 per hour

→ Audio Output: $1.40 per hour
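For budgeting, the two rates above can be turned into a rough per-session estimate. The sketch below assumes that cost scales linearly with minutes of audio in each direction and that nothing else is billed; how the API actually meters input versus output audio is not covered here, so treat the result as an approximation. The function name session_cost_usd is illustrative.

```python
AUDIO_INPUT_USD_PER_HOUR = 0.35   # rate quoted above
AUDIO_OUTPUT_USD_PER_HOUR = 1.40  # rate quoted above


def session_cost_usd(input_minutes: float, output_minutes: float) -> float:
    """Estimate the audio cost of one session in US dollars."""
    if input_minutes < 0 or output_minutes < 0:
        raise ValueError("minutes must be non-negative")
    input_cost = (input_minutes / 60) * AUDIO_INPUT_USD_PER_HOUR
    output_cost = (output_minutes / 60) * AUDIO_OUTPUT_USD_PER_HOUR
    return input_cost + output_cost


if __name__ == "__main__":
    # One hour of audio in each direction costs the sum of both rates.
    print(f"${session_cost_usd(60, 60):.2f}")  # prints $1.75
```

Because output audio costs four times as much per hour as input audio, an agent that talks more than it listens is the more expensive pattern under this estimate.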

For everyday users, Gemini Live remains free via the Gemini app on Android and iOS. Gemini Advanced subscribers (Google One AI Premium) continue to receive full access to all Live features.

10. FREQUENTLY ASKED QUESTIONS

Q: What is Gemini 3.1 Flash Live?

A: Gemini 3.1 Flash Live is Google’s newest and most advanced real-time audio and voice AI model, launched on March 26, 2026. It powers Gemini Live on Android and iOS, Search Live globally, and is available to developers via the Gemini Live API in Google AI Studio.

Q: How is Gemini 3.1 Flash Live different from 2.5 Flash?

A: Gemini 3.1 Flash Live delivers lower latency, twice the conversational memory length, significantly better noise filtering, improved tonal understanding, and stronger instruction-following compared to Gemini 2.5 Flash Native Audio. It also natively processes audio-to-audio rather than using a transcription pipeline.

Q: Is Gemini 3.1 Flash Live free to use?

A: Yes. Gemini Live is free for everyday users through the Gemini app on Android and iOS. For developers using the Gemini Live API, pricing is $0.35 per hour for audio input and $1.40 per hour for audio output, unchanged from the previous model.

Q: What languages does Gemini 3.1 Flash Live support?

A: It supports real-time conversations in over 90 languages. This multilingual capability is also what enabled Google to expand Search Live from 2 countries to more than 200 simultaneously.

Q: Is Gemini 3.1 Flash Live available to developers?

A: Yes. It is available in preview via the Gemini Live API in Google AI Studio. Developers can use it to build real-time voice and vision agents with support for tool use, function calling, session management, and bidirectional streaming.

Q: What is Search Live and where is it available?

A: Search Live lets you point your phone camera at anything and have a real-time voice conversation with Google Search about what it sees. It was previously available only in the US and India. As of March 26, 2026, it has expanded to over 200 countries and territories in 90+ languages.

Q: How does Gemini 3.1 Flash Live handle background noise?

A: The model uses native audio processing that is specifically improved for noisy environments. It filters out background sounds like traffic and television and focuses on the speaker’s voice. This makes it significantly more reliable for real-world use cases than previous models.

Q: What is SynthID watermarking?

A: SynthID is Google’s inaudible digital watermark embedded in all audio generated by Gemini 3.1 Flash Live. Humans cannot hear it, but detection software can identify it. It is designed to prevent AI-generated voice from being misused for disinformation or impersonation.

Q: How does Gemini 3.1 Flash Live compare to ChatGPT Voice Mode?

A: Gemini 3.1 Flash Live has a strong advantage in ecosystem integration — full native access to Google Search, global visual search via Search Live, and leading benchmark scores for multi-step tool use. ChatGPT Advanced Voice Mode remains competitive for conversational fluidity and raw response speed.